Best Practices for Securing AI Systems with Authorization

Gal Helemski

June 30, 2025

As organizations rapidly adopt AI and LLM-based systems, security concerns have become paramount. While the race to implement AI solutions accelerates, many companies face a critical challenge: how to balance innovation speed with robust security controls. This blog explores the essential practices for securing AI systems through proper authorization and access controls.

The Growing Threat Landscape

The adoption of AI systems has introduced unprecedented security challenges. Unlike traditional applications, AI systems operate at a much larger scale and are connected to vast internal repositories of data and documents. This connectivity dramatically increases the potential for data exposure and regulatory compliance failures.

Key Challenges Organizations Face

- Security vs. Agility Trade-offs – Organizations often struggle to balance the need for rapid AI deployment with essential security requirements. This tension sometimes leads to bypassing security controls or delaying AI adoption altogether.

- Amplified Data Exposure Risks – AI systems present the same fundamental security challenges as traditional systems, but on a much larger scale. Because AI systems are connected to many internal data repositories, a single gap in access control can expose far more data than it would in a traditional application.

- Regulatory & Compliance Requirements – The expanded attack surface and data exposure potential make regulatory compliance significantly more challenging, requiring efficient approaches to data protection and privacy.

Industry Recognition of AI Security Challenges

The severity of these challenges is reflected in recent industry reports:

- OWASP Top 10 for LLM Applications: A significant portion of the identified vulnerabilities relates directly to access control and authorization issues.

- Gartner Predictions: Forecasts indicate that 50% of cybersecurity attacks will stem from insufficient or improperly implemented access controls.

- NIST Guidelines: Recent risk management guidelines for AI systems specifically emphasize the need for proper access controls and data protection.

Adopting AI Responsibly – The Four Critical Control Points

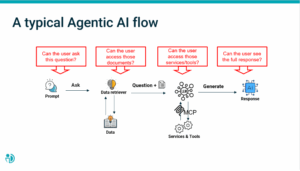

To effectively secure AI systems, organizations must implement controls at four critical points in the AI workflow:

1. Question Control (Prompt Filtering)

Controlling user prompts represents the first line of defense, following the security principle of implementing controls as early as possible in the process.
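As a rough illustration of prompt filtering, the sketch below checks a prompt against a per-role list of blocked topics before it is forwarded to the model. The role names, the `BLOCKED_TOPICS` table, and the `check_prompt` function are all hypothetical, and simple keyword patterns stand in for whatever classifier or policy engine a real deployment would use.

```python
import re
from dataclasses import dataclass

# Hypothetical policy: topics each role may NOT ask about.
BLOCKED_TOPICS = {
    "contractor": [r"\bsalar(y|ies)\b", r"\bacquisition\b"],
    "employee": [r"\bacquisition\b"],
    "hr_manager": [],
}


@dataclass(frozen=True)
class PromptDecision:
    allowed: bool
    reason: str


def check_prompt(role: str, prompt: str) -> PromptDecision:
    """Decide whether a prompt may be forwarded to the model."""
    # Roles with no policy entry are denied by default.
    if role not in BLOCKED_TOPICS:
        return PromptDecision(False, f"unknown role: {role}")
    for pattern in BLOCKED_TOPICS[role]:
        if re.search(pattern, prompt, flags=re.IGNORECASE):
            return PromptDecision(False, f"topic blocked for role {role}")
    return PromptDecision(True, "ok")
```

Under this example policy, a contractor asking about salaries is stopped before the model is ever called, while an HR manager asking the same question is allowed through.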

2. Data Access Control

Organizations should filter data queries at the source rather than post-retrieval. This ensures that when a Retrieval-Augmented Generation (RAG) system selects the most relevant documents, those documents are both relevant to the query and accessible to the specific user.
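The difference between filtering at the source and filtering after retrieval can be sketched with a toy corpus. Everything here is an assumption made for illustration: the `Document` type, the group names, and the precomputed relevance scores. A real RAG system would push the same filter into its vector store or search index query.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    allowed_groups: frozenset
    score: float  # relevance to the query, assumed precomputed


def retrieve(docs, user_groups, k):
    """Filter at the source: rank only documents the user may read."""
    user_groups = frozenset(user_groups)
    permitted = [d for d in docs if d.allowed_groups & user_groups]
    return sorted(permitted, key=lambda d: d.score, reverse=True)[:k]


def retrieve_post_filter(docs, user_groups, k):
    """Contrast case: rank first, then drop what the user may not read."""
    user_groups = frozenset(user_groups)
    top = sorted(docs, key=lambda d: d.score, reverse=True)[:k]
    return [d for d in top if d.allowed_groups & user_groups]


# Hypothetical corpus with group-based permissions.
CORPUS = [
    Document("board-minutes", frozenset({"exec"}), 0.95),
    Document("salary-bands", frozenset({"hr"}), 0.90),
    Document("eng-handbook", frozenset({"eng", "hr"}), 0.70),
    Document("onboarding", frozenset({"eng"}), 0.60),
]
```

For a user in the `eng` group with `k=2`, `retrieve` returns `eng-handbook` and `onboarding`. `retrieve_post_filter` returns nothing, because both top-ranked documents are ones the user cannot read and they crowd out the accessible ones.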

3. Service and Tool Access (MCP)

The Model Context Protocol (MCP) enables AI systems to access various services and tools. Organizations should consider granular control over which services and tools users can access.
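One way to picture granular tool control is a small gateway that sits between the model and its tools. The sketch below does not use any MCP SDK. Plain Python functions stand in for MCP-exposed tools, and the tool names, roles, and `TOOL_POLICY` table are hypothetical.

```python
# Hypothetical tools, standing in for services exposed through MCP.
def search_tickets(query):
    return f"tickets matching {query!r}"


def issue_refund(order_id):
    return f"refund issued for {order_id}"


TOOLS = {"search_tickets": search_tickets, "issue_refund": issue_refund}

# Hypothetical policy: which roles may invoke which tools.
TOOL_POLICY = {
    "support_agent": {"search_tickets"},
    "support_lead": {"search_tickets", "issue_refund"},
}


def list_tools(role):
    """Advertise only the tools this role is permitted to call."""
    return sorted(TOOL_POLICY.get(role, set()) & set(TOOLS))


def call_tool(role, tool_name, **kwargs):
    """Re-check the policy on every call, not only at listing time."""
    if tool_name not in TOOL_POLICY.get(role, set()):
        raise PermissionError(f"{role} may not call {tool_name}")
    return TOOLS[tool_name](**kwargs)
```

Checking at both points is deliberate: hiding a tool from the listing reduces the chance the model tries to use it, while the check in `call_tool` is what actually enforces the policy.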

4. Response Masking

Masking protects against sensitive information disclosure and can be adapted to specific regulatory requirements. Organizations should use masking to redact sensitive data (such as PII) based on user identity and organizational policies.
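A minimal sketch of identity-based masking might look like the following. The two regular expressions (email addresses and US-style Social Security numbers) are simplified examples rather than production-grade PII detection, and the roles and the `UNMASKED_FOR_ROLE` table are hypothetical.

```python
import re

# Simplified example patterns; real PII detection needs far more coverage.
PII_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical policy: PII types each role may see unmasked.
UNMASKED_FOR_ROLE = {
    "compliance_officer": {"email", "ssn"},
    "support_agent": {"email"},
}


def mask_response(role, text):
    """Redact every PII type the role is not cleared to see."""
    visible = UNMASKED_FOR_ROLE.get(role, set())
    for pii_type, pattern in PII_PATTERNS.items():
        if pii_type not in visible:
            text = pattern.sub(f"[{pii_type.upper()} REDACTED]", text)
    return text
```

The same model response is then rendered differently per user: a compliance officer sees it unchanged, a support agent sees the email address but not the SSN, and a role with no policy entry sees neither.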

How to Achieve Control?

Effective AI security requires comprehensive policy management that can address all four control points consistently. This approach enables organizations to:

- Implement zero-trust, identity-first security principles

- Maintain consistent access controls across the entire AI workflow

- Adapt quickly to changing regulatory requirements

- Scale security controls with AI system growth
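To make the idea of consistent policy management concrete, the sketch below keeps a single rule set and a single decision function that all four control points could consult. The `Rule` shape, the action names, and the sample rules are assumptions for illustration only and do not describe any particular product's policy model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    role: str
    action: str    # "ask", "read", "invoke", or "view"
    resource: str  # topic, document tag, tool name, or PII type


# Hypothetical central policy shared by every control point.
POLICY = frozenset({
    Rule("analyst", "ask", "finance"),
    Rule("analyst", "read", "finance-reports"),
    Rule("analyst", "invoke", "run_query"),
    Rule("auditor", "view", "ssn"),
})


def is_allowed(role, action, resource):
    """Single decision function; anything not explicitly granted is denied."""
    return Rule(role, action, resource) in POLICY
```

Prompt filtering would ask `is_allowed(role, "ask", topic)`, retrieval would ask about `"read"`, the tool gateway about `"invoke"`, and masking about `"view"`. Because every check goes through one policy, a regulatory change becomes a rule update rather than a code change in four places.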

The time to adopt AI is now, but it must be done responsibly. Organizations cannot afford to compromise security for speed, nor can they allow security concerns to prevent AI adoption entirely. The solution lies in implementing comprehensive, policy-driven access controls that secure AI systems without hindering innovation.

By addressing the four critical control points and implementing proper authorization frameworks, organizations can confidently deploy AI systems that are both powerful and secure. The key is to recognize that AI security is not just about protecting data—it’s about enabling responsible innovation that drives business value while maintaining trust and compliance.

Remember: in the rapidly evolving landscape of AI security, the organizations that successfully balance innovation with security will be those that implement comprehensive access controls from the very beginning of their AI journey.

Ready to Future-Proof Your AI Strategy?

Don’t wait until AI security becomes a liability. Start with access control—done right.

Explore how PlainID can help your organization secure AI at scale with externalized, policy-based access control.