Challenging the Status Quo: Why Agentic AI Demands a New Approach to Access Control

The age of agentic AI is no longer a distant forecast; it’s a present-day reality, promising to revolutionize enterprise productivity. Yet this new wave of autonomous technology carries a hidden and profound security risk that traditional controls were never designed to manage. This isn’t just a hypothetical concern. A forward-looking report from the Cloud Security Alliance (CSA), “Agentic AI Identity & Access Management: A New Approach,” quantifies the urgency: 60% of enterprises are expected to use AI agents within the next year, yet organizations are deploying these powerful systems into environments secured by outdated rules. This mismatch creates a critical authorization blind spot, leaving a wide-open door for data exposure, compliance failures, and privilege escalation on an unprecedented scale.

The New World: What Makes Agentic AI Different?

To understand why old rules no longer apply, we must first appreciate what makes agentic AI a paradigm shift. Unlike traditional applications that follow predictable workflows, AI agents operate with unprecedented autonomy, adapt their behavior based on new information, and interact across countless systems simultaneously. They are evolving from simple, interactive tools into fully autonomous “digital employees” that are deeply integrated into organizational structures.

This evolution brings an explosive growth in complexity. According to the CSA paper, security teams will soon be responsible for managing not hundreds of human users, but tens of thousands of dynamic, ephemeral agent identities. These agents can generate 148 times more authentication requests than human users, placing an impossible strain on legacy infrastructure. The core challenge is simple: we are trying to govern dynamic, machine-speed systems with static, human-speed controls.

Why Legacy Access Control Shatters Under Pressure

The same report from the Cloud Security Alliance (CSA) confirms this dangerous reality, outlining precisely why traditional frameworks like Role-Based Access Control (RBAC) and OAuth are insufficient for agentic AI.

The Flaw of Coarse & Static Permissions

Traditional IAM is built on pre-defined roles and permissions that are too broad and rigid for the fluid needs of AI agents. An agent’s “job” can change from moment to moment, yet organizations assign it static, long-lived permissions, creating a massive state of over-privilege.

- What Can Happen? Imagine a financial analysis agent granted a static “read access” role to an enterprise data lake. A threat actor uses a prompt injection attack to instruct the agent to find and summarize all documents related to a confidential, upcoming merger. The agent, simply following its new instructions, accesses the sensitive files and exfiltrates the summary. Your legacy IAM system sees nothing wrong—a valid agent used its valid permissions. It is completely blind to the context and intent, and a catastrophic data breach occurs.

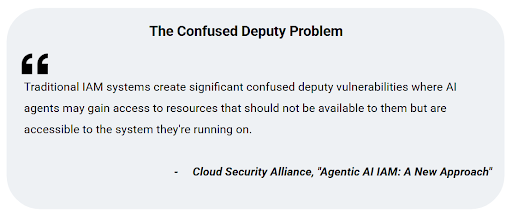

This scenario is a classic example of what the CSA paper identifies as the ‘Confused Deputy Problem’. This occurs when an agent, which has broad system-level permissions, is tricked into misusing its authority by a malicious actor. Because the traditional IAM system can’t distinguish between the agent’s legitimate function and the attacker’s malicious intent, it allows the action, turning a trusted agent into an unwitting accomplice in a data breach.

The Danger of Limited Context Awareness

This leads to the next critical failure: the lack of context. Traditional systems make access decisions based on a simple check: does this user’s role have permission to access this resource? They have no understanding of the runtime context, the agent’s intent, or the real-time risk level of a request. An agent accessing a single customer record at 10 AM is treated the same as one attempting to download ten thousand records at 3 AM. This binary, context-blind approach is an open invitation for abuse.
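The contrast between the 10 AM and 3 AM requests can be sketched in a few lines of Python. This is a minimal illustration, not an implementation from the CSA report: the role table, the `AccessRequest` fields, the business-hours window, and the 100-record threshold are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass
from datetime import time

# Hypothetical role table; role and permission names are illustrative only.
ROLE_PERMISSIONS = {"support-agent": {"customer_records:read"}}


@dataclass(frozen=True)
class AccessRequest:
    role: str
    permission: str
    record_count: int   # how many records the agent is asking for
    local_time: time    # when the request is made


def role_only_check(req: AccessRequest) -> bool:
    """Traditional check: does the role hold the permission? Nothing else."""
    return req.permission in ROLE_PERMISSIONS.get(req.role, set())


def context_aware_check(
    req: AccessRequest,
    max_records: int = 100,          # assumed bulk-access threshold
    start: time = time(8, 0),        # assumed business-hours window
    end: time = time(18, 0),
) -> bool:
    """Role check plus two runtime signals: request volume and time of day."""
    if not role_only_check(req):
        return False
    in_hours = start <= req.local_time <= end
    return in_hours and req.record_count <= max_records


routine = AccessRequest("support-agent", "customer_records:read", 1, time(10, 0))
bulk = AccessRequest("support-agent", "customer_records:read", 10_000, time(3, 0))

print(role_only_check(routine), role_only_check(bulk))          # True True
print(context_aware_check(routine), context_aware_check(bulk))  # True False
```

The role-only check returns the same answer for both requests because the role and permission are identical. Only when volume and time of day are part of the decision do the two requests diverge. A real policy would weigh many more signals; the point here is simply that the inputs to the decision have to include runtime context.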

The Crisis of Scalability and Delegation

Even if we could create granular roles for every possible task, the sheer volume of agent identities and their interactions would overwhelm traditional IAM infrastructure. Furthermore, as agents begin to spawn sub-agents and delegate tasks, traditional systems struggle to track these complex chains of authority, obscuring responsibility and making it impossible to determine who, or what, is truly accountable for an action.
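One way to picture what tracking a chain of authority involves is a record that remembers who delegated to whom and refuses to let a sub-agent hold more than its parent. The sketch below is a hypothetical data structure written for this article, not a mechanism described in the CSA report; the `Delegation` class, the identities, and the scope names are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Delegation:
    actor: str                               # identity acting at this link
    scopes: frozenset                        # permissions held at this link
    parent: Optional["Delegation"] = None    # who delegated to this actor

    def delegate(self, sub_actor: str, scopes) -> "Delegation":
        """Create a child link; it may only narrow the parent's scopes."""
        requested = frozenset(scopes)
        if not requested <= self.scopes:
            extra = sorted(requested - self.scopes)
            raise PermissionError(f"{self.actor} cannot delegate {extra}")
        return Delegation(sub_actor, requested, self)

    def chain(self) -> list:
        """Return every actor from the original principal down to this one."""
        actors, link = [], self
        while link is not None:
            actors.append(link.actor)
            link = link.parent
        return list(reversed(actors))


# Hypothetical identities and scopes.
root = Delegation("alice@example.com", frozenset({"reports:read", "reports:write"}))
agent = root.delegate("analysis-agent", {"reports:read"})
sub_agent = agent.delegate("summarizer-sub-agent", {"reports:read"})

print(sub_agent.chain())
# ['alice@example.com', 'analysis-agent', 'summarizer-sub-agent']
```

With a record like this attached to each action, an audit can answer on whose behalf a sub-agent acted, and an attempt such as `agent.delegate("rogue-sub-agent", {"reports:write"})` fails because the analysis agent never held that scope. Doing this for tens of thousands of ephemeral identities is the scalability problem the paragraph above describes.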

The Stakes: A Failure of Trust and Control

The implications of this blind spot are severe. As the CSA report warns, “The failure to address the unique identity challenges posed by AI agents… could lead to catastrophic security breaches, loss of accountability, and erosion of trust in these powerful technologies”.

Without a new approach, organizations are flying blind, unable to effectively govern the powerful new tools they are so eagerly deploying. The risk isn’t just a technical problem; it’s a fundamental business problem that threatens data security, regulatory compliance, and brand reputation.

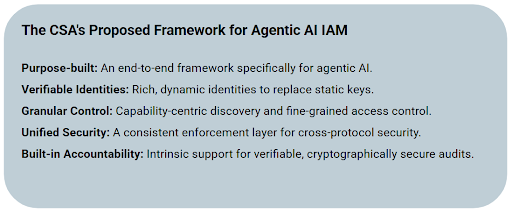

Fortunately, the path forward is becoming clearer. The CSA report lays the groundwork for a new paradigm built on several key principles.

In Part 2 of this series, we will shift from the ‘why’ to the ‘what’—exploring a new strategic principle that moves beyond identity to control intent, laying the foundation for secure and responsible AI adoption.